The Present and Future of MCP(Model Context Protocol)

In the rapidly evolving landscape of artificial intelligence, a new standard has emerged that's quietly revolutionizing how AI agents interact with tools and capabilities. The Model Context Protocol (MCP) has grown from an overlooked concept to a central pillar in AI development seemingly overnight. But what exactly is driving this sudden interest, and why should developers and AI enthusiasts take notice?

The Unexpected Journey of MCP

When the Model Context Protocol was first introduced in November 2023, it received little attention from the developer community. Initially described as "the future of LLM Agent Tools," MCP was largely dismissed as just another collection of tools for large language models - nothing more revolutionary than a basic toolbox.

This lack of interest stemmed from two key factors. First, MCP appeared to be merely a collection of tools without significant differentiation from existing solutions. Second, and perhaps more importantly, no major frameworks supported MCP integration at the time, limiting its practical application.

The turning point came in early 2024 when Cursor.AI, one of the leading automated coding tools, announced full MCP integration into their system. This endorsement from a prominent platform sparked widespread interest, transforming MCP from an overlooked concept to one of the hottest topics in AI development within just a few months of its release.

Why MCP Matters Now

The sudden surge in popularity isn't just about following trends. MCP represents something more fundamental: a standardization layer that promises to make AI tools and agents more interoperable and extensible. By creating a common protocol for how AI models interact with their context and tools, MCP enables developers to build capabilities that can work across different frameworks and models.

This approach is comparable to how USB-C standardized hardware connections, allowing devices from different manufacturers to work seamlessly together. Similarly, MCP aims to decouple AI capabilities from specific frameworks, fostering a more collaborative and innovative ecosystem.

For developers, this means the ability to create tools once and deploy them across multiple AI agents. For users, it promises a more consistent and powerful experience as AI systems can leverage a broader range of specialized capabilities.

The rise of MCP signals a maturing AI landscape where standardization and interoperability are becoming as important as raw capabilities. As more platforms adopt this protocol, we're likely to see an explosion of specialized tools and capabilities that can be mixed and matched across different AI systems.

In the next section, we'll dive deeper into exactly what MCP is, exploring its technical underpinnings and how it's changing the way developers approach AI agent construction.

The Evolution of AI Agents: Why We Need a Universal Standard

The Challenge of Building Modern AI Systems

Today's AI agents represent remarkable technological achievements. From simple chatbots to sophisticated autonomous systems, these digital assistants can understand their environment, reason through complex problems, and take meaningful actions to achieve goals. However, behind this seamless facade lies a complex integration challenge.

Building effective AI agents typically requires connecting a powerful Large Language Model (LLM) with various external tools and data sources. These might include:

- Web search capabilities

- Code execution environments

- Database connections

- Popular app integrations (Slack, Gmail, Calendar)

- Custom functions for specialized tasks

Until recently, developers faced a significant hurdle: these integrations often required commitment to specific frameworks like LangChain or LlamaIndex. While these frameworks offer valuable pre-built components, they created a fragmented ecosystem where tools were tightly bound to their parent frameworks.

The Compatibility Problem

This fragmentation created real-world limitations:

- Tools built for LangChain might not work with agents developed in other frameworks

- Developers had to recreate similar functionality across different ecosystems

- Innovation was constrained by framework boundaries

- Users couldn't easily mix and match the best tools from different sources

If this situation sounds familiar, it's because we've seen it before in hardware. Remember the days of proprietary charging cables and connectors? Each manufacturer had their own standard, creating confusion and waste. The introduction of USB, and later USB-C, transformed the hardware landscape by establishing a universal connection standard.

Enter the Model Context Protocol (MCP)

This is where the Model Context Protocol (MCP) comes in. Introduced by Anthropic in late 2023, MCP aims to be the "USB-C" for AI capabilities. It creates a standardized way for AI agents to discover and interact with external tools and data sources, regardless of which framework was used to build either component.

While MCP didn't immediately gain widespread attention upon its release, its adoption has accelerated significantly in early 2024. This surge in popularity, particularly following its integration with tools like Cursor AI, signals a potential turning point in how we build and connect AI systems.

In the following sections, we'll explore exactly what MCP is, why it's gaining traction now, and how it might reshape the future of AI agent development.

Understanding the Structure of the Model Context Protocol (MCP)

The Foundation: It's All About the Protocol

At its core, MCP (Model Context Protocol) is exactly what its name suggests - a protocol. This isn't just semantics; the protocol aspect is fundamental to understanding MCP's value. Just as HTTP standardized web communication, MCP establishes clear rules for how AI agents interact with external tools and capabilities.

The Architecture of MCP

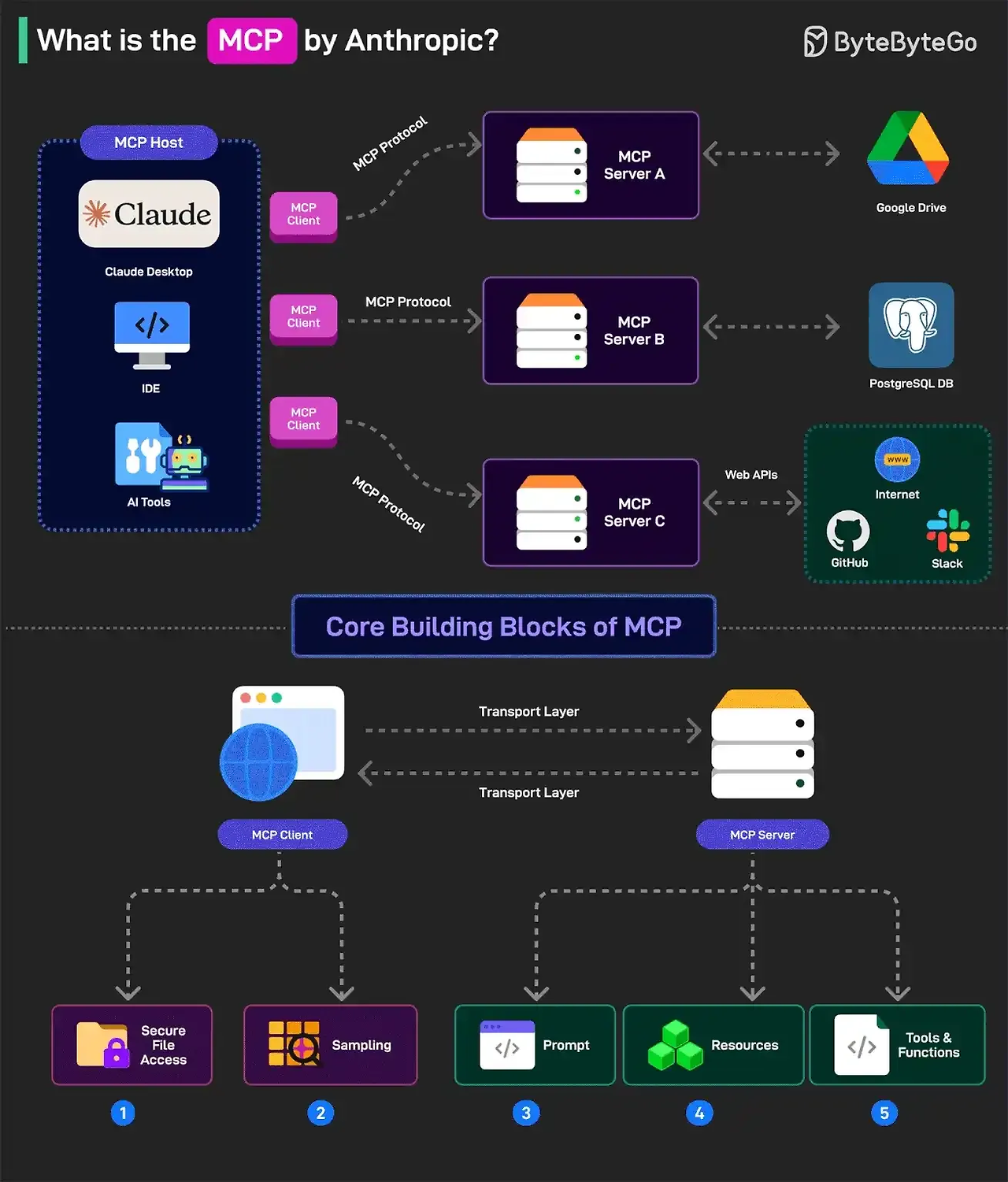

MCP's structure can be best understood through its key components and how they interact:

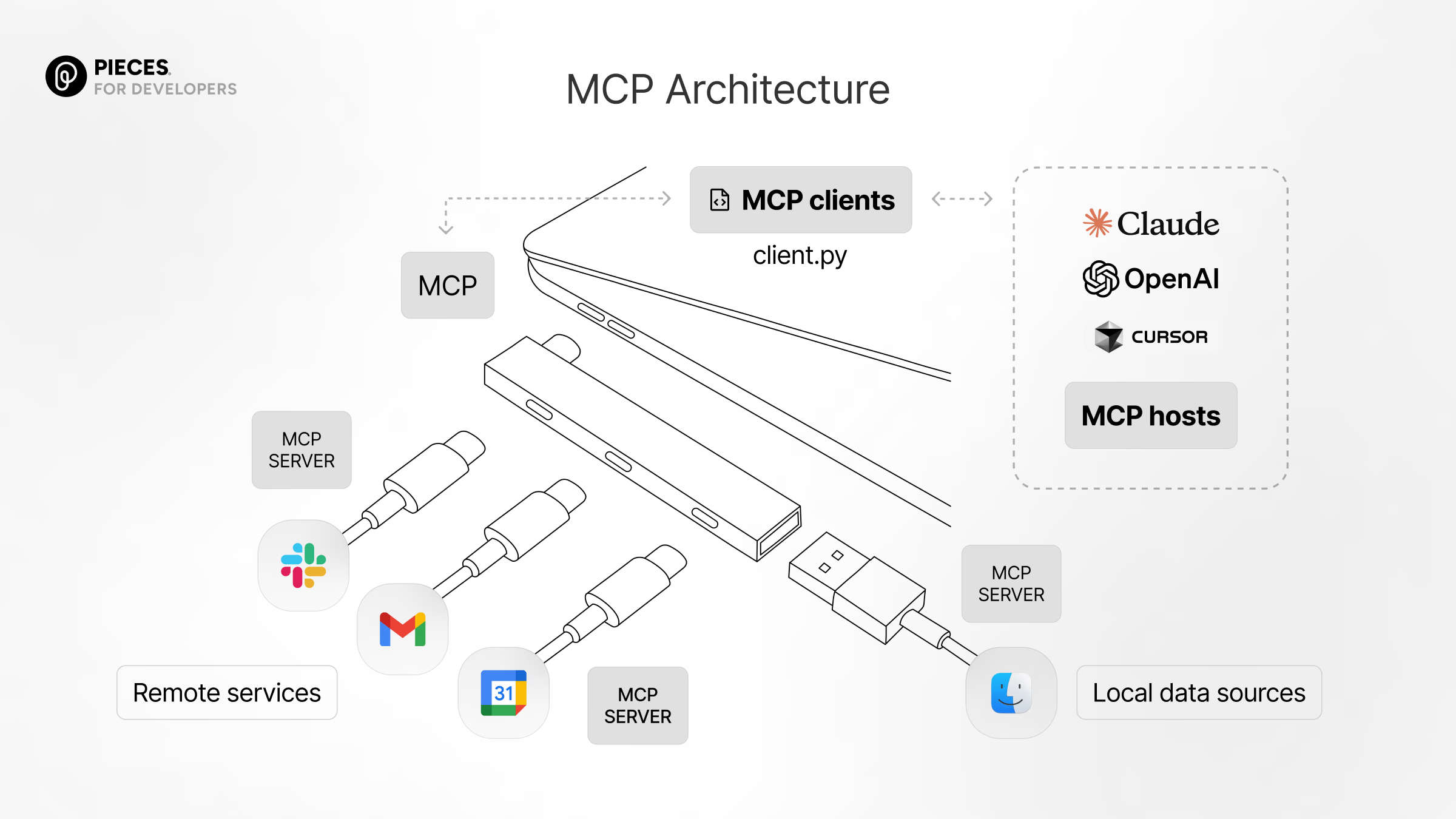

1. MCP Clients (Hosts)

- These are AI agents or applications that need to use external capabilities

- Examples include Anthropic's Claude Desktop and Cursor AI

- Clients implement the MCP standard to discover and call tools

- Think of these as devices with USB-C ports (laptops, phones) in our hardware analogy

2. MCP Servers

- These provide the actual tools or capabilities

- They can include integrations with services like Slack, Gmail, or Google Calendar

- They also encompass local data sources, web search capabilities, or custom systems

- Servers advertise their capabilities and respond to requests from clients

- In our analogy, these are like USB-C accessories (chargers, drives)

3. The MCP Interface

- This is the standardized protocol itself - the "plug and socket" specification

- It defines how clients request actions and how servers provide results

- This standardization allows any MCP client to interact with any MCP server

How MCP Fits into Agent Architecture

MCP integrates seamlessly with the common AI agent architecture based on the ReAct (Reasoning and Acting) framework:

- LLM Core: The central reasoning engine (GPT-4, Claude 3, etc.)

- Tools: External capabilities the LLM can access

- Thought: The LLM decides if and which tool to use

- Action: The LLM formulates a request to the chosen tool

- Observation: The tool executes the request and returns results

- Loop/Final Answer: The LLM uses the results to continue reasoning or generate a final answer

MCP primarily standardizes the critical interface between the Action step and the Observation step. This means AI agents don't need custom code for each specific tool - they can interact with all MCP-compliant tools through the same protocol.

Beyond Just Tools

While tools are the most visible aspect of MCP, the protocol encompasses several key elements:

- Tools: Functions, parameters, and descriptions that help the LLM understand and select them

- Resources: Access to contextual data like project files

- Prompts: Standardization of certain prompting strategies

- Server/Client Discovery: Mechanisms for clients to find available servers

The tool standardization has been the primary driver of MCP's growing popularity, offering the most immediate and practical benefits to developers and users alike.

By establishing this universal structure, MCP eliminates the need to reinvent integration patterns for each new tool or framework, allowing developers to focus on creating powerful capabilities rather than solving compatibility issues.

How Cursor AI Sparked the MCP Revolution

The Tipping Point for Model Context Protocol

While the Model Context Protocol (MCP) was introduced in November 2023, it initially received little attention from the broader developer community. The protocol's true potential remained largely theoretical until a critical turning point: Cursor AI's integration of MCP in February 2024. This single implementation catalyzed a surge in popularity that transformed MCP from an interesting concept into a rapidly growing standard.

Why Cursor AI Made All the Difference

Cursor AI's adoption of MCP served as a powerful catalyst for several key reasons:

1. Large, Relevant User Base

Cursor AI isn't just any application - it's a specialized code editor designed specifically for AI-assisted development. By implementing MCP, Cursor exposed the protocol to:

- Thousands of developers actively seeking AI enhancements

- A community already comfortable with cutting-edge AI technologies

- Users who could immediately see the practical benefits

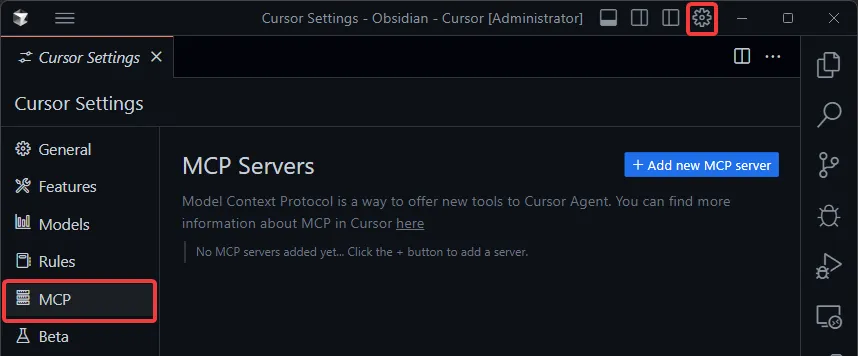

2. Practical Demonstration with a User-Friendly Interface

Cursor AI didn't just implement MCP theoretically - it made it accessible:

- The application featured an intuitive settings page where users could easily add and enable MCP servers

- This simple interface demonstrated how external tools could be integrated without requiring deep framework knowledge

- Users could experience the benefits directly rather than just reading about the concept

3. Powerful Validation from a Trusted Tool

Cursor AI's reputation lent significant credibility to MCP:

- As a popular, fast-moving tool in the AI development space, Cursor's adoption signaled that MCP was production-ready

- This validation shifted perception of MCP from a theoretical standard to a practical, valuable one

- Other developers and platforms took notice, recognizing that if Cursor found value in MCP, they should explore it too

The Network Effect Kicks In

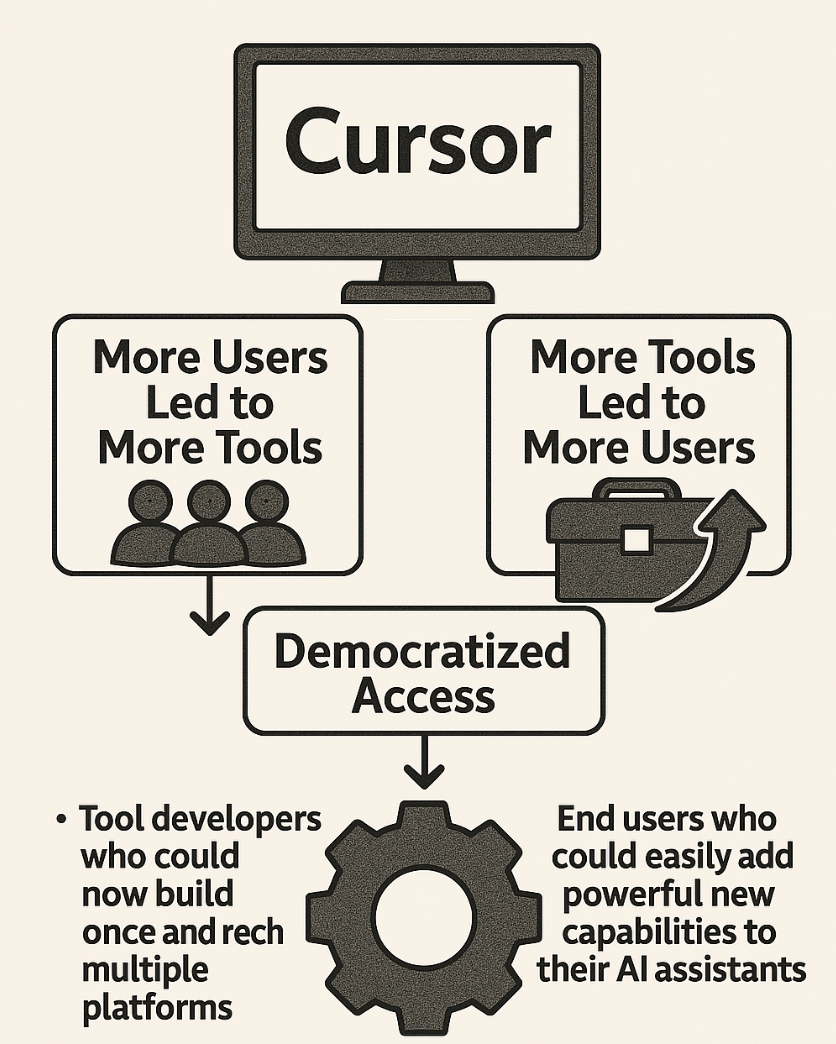

After Cursor AI's implementation, MCP began experiencing the powerful "network effects" that characterize successful standards:

- More Users Led to More Tools: As Cursor's large user base began working with MCP, developers recognized an opportunity to create tools for this growing audience

- More Tools Led to More Users: As the ecosystem of available MCP tools expanded, more users were drawn to MCP-compatible applications like Cursor

- Democratized Access: Cursor's implementation showed that using MCP was straightforward, lowering the barrier to entry for both:

- Tool developers who could now build once and reach multiple platforms

- End users who could easily add powerful new capabilities to their AI assistants

From Concept to Standard

Before Cursor's adoption, MCP was primarily of interest to framework developers or those building custom AI agents. After Cursor's implementation, it became relevant to everyday developers and users.

This shift made the benefits of MCP tangible and immediately valuable to a much wider audience. The protocol transformed from an interesting academic concept into a practical standard that solved real problems for real users, setting the stage for its rapid adoption across the AI development ecosystem.

MCP's Impact on the AI Ecosystem

As the Model Context Protocol (MCP) continues to gain momentum, it promises to fundamentally reshape how AI agents are built, distributed, and used. This standardization brings both significant opportunities and potential challenges that developers, businesses, and users should consider.

Positive Impacts: Opening New Possibilities

The emergence of AI tool marketplaces like Smithery represents MCP's most transformative potential. These platforms enable developers to share, discover, and monetize specialized AI tools, creating a sustainable ecosystem that rewards innovation. This marketplace approach drives continuous improvement that wasn't possible in the pre-MCP landscape.

MCP significantly reduces redundant work by allowing developers to leverage pre-built, standardized tools. Common integrations no longer need rebuilding for each framework, eliminating duplicate effort and allowing teams to focus on their unique value propositions rather than solving the same connectivity problems repeatedly.

The protocol enables unprecedented specialization. Developers can create highly optimized tools for specific tasks that work across the entire MCP ecosystem. Domain experts can translate specialized knowledge into accessible tools, while global developers can create solutions for underserved markets, knowing their work can reach any MCP-compatible client.

For end users, the benefits are clear: more versatile AI systems, access to specialized capabilities, and more intuitive interfaces across different applications. This makes advanced AI more accessible to non-technical users and increases the overall utility of AI assistants.

Potential Challenges: Growing Pains

Despite its promise, MCP faces significant hurdles. Security concerns are paramount—users need reliable ways to trust MCP servers before integration. Without robust safeguards against malicious tools, adoption could stall, and questions of liability remain unresolved when tools mishandle sensitive data.

Quality control becomes increasingly difficult as available tools multiply. Without proper curation systems, users will struggle to identify reliable options among thousands. Marketplaces must establish clear standards for listings while the community develops approaches for handling abandoned or subpar tools.

Technical challenges include maintaining compatibility as the standard evolves, managing version conflicts, and ensuring consistent performance across implementations—inconsistencies could undermine confidence in the standard itself.

Finally, MCP's success depends on broad industry adoption. While Anthropic and Cursor AI have embraced it, other major players like OpenAI and Google remain uncertain. Framework developers must balance existing ecosystems with MCP integration, likely causing temporary fragmentation as platforms adopt at different paces.

MCP: Standardizing the Future of AI Agent Development

A New Chapter in AI Interoperability

The Model Context Protocol (MCP) represents a significant milestone in AI's evolution - not just another technical specification, but a fundamental shift toward standardization in how AI agents interact with the world. Just as USB-C transformed hardware connectivity by providing a universal interface, MCP creates a standardized way for AI agents to discover and utilize external tools and capabilities.

From Concept to Movement

Though introduced in late 2023, MCP's true potential remained largely theoretical until early 2024, when Cursor AI's implementation brought it into the practical realm. This critical adoption validated the protocol and demonstrated its value to a wide audience of developers. The resulting surge in popularity wasn't merely coincidental - it was the natural consequence of MCP addressing a genuine need in the AI development ecosystem.

The Ecosystem Effect

MCP's growing influence has already begun reshaping the AI landscape:

- Tool Marketplaces: Platforms like Smithery are becoming vibrant hubs where developers share, discover, and potentially monetize MCP-compatible tools

- Framework Evolution: Traditional frameworks like LangChain are adapting to complement rather than compete with MCP

- Developer Empowerment: Creators worldwide can now build specialized tools that reach a global audience through a standardized interface

- User Accessibility: Even non-technical users can enhance their AI assistants with powerful capabilities through intuitive interfaces

The Road Ahead

While challenges remain in security, quality control, and broader adoption, MCP's trajectory suggests it will become a foundational element in AI agent development. The future it enables is one where creating sophisticated AI systems becomes less about custom integration work and more about thoughtfully combining standardized, specialized capabilities.

As the protocol matures and its ecosystem grows, we can expect to see increasingly powerful and accessible AI agents that can seamlessly leverage the collective innovation of a global developer community. MCP isn't just changing how we build AI - it's democratizing access to advanced AI capabilities and accelerating the pace of innovation across the entire field. In the evolving story of artificial intelligence, MCP represents a crucial chapter - one that moves us from fragmentation toward collaboration, from closed systems toward open standards, and from limited capabilities toward boundless potential.

I want you guys to guess what Fission team is gonna do to implement MCP in web3. How might this standardized protocol transform decentralized applications and autonomous agents in the blockchain space? The collision of MCP's interoperability with web3's permissionless innovation could open entirely new frontiers for AI capabilities. What possibilities do you see at this intersection?