AI Agent with Robotics

The Dawn of Generalist Humanoid Robots

In the rapidly evolving field of artificial intelligence, one of the most exciting frontiers is the development of generalist humanoid robots capable of performing a wide range of tasks in human environments. Recent breakthroughs in hardware design, model architecture, and training methodologies have brought us closer than ever to realizing this vision. This blog post explores the current state of robotics AI agents, focusing on NVIDIA's groundbreaking GR00T N1 model, and examines both the possibilities and challenges that lie ahead.

The Rise of Robot Foundation Models

Just as foundation models like GPT and CLIP have revolutionized natural language processing and computer vision, similar approaches are now being applied to robotics. These robot foundation models aim to provide a versatile "backbone" of intelligence that can reason about novel situations, handle real-world variability, and quickly adapt to new tasks.

NVIDIA recently introduced GR00T N1, an open foundation model specifically designed for humanoid robots. GR00T (Generalist Robot Transformer) represents a significant step toward creating robots that can operate in complex, unstructured environments and perform a diverse array of tasks through a unified learning framework.

What Makes GR00T N1 Special?

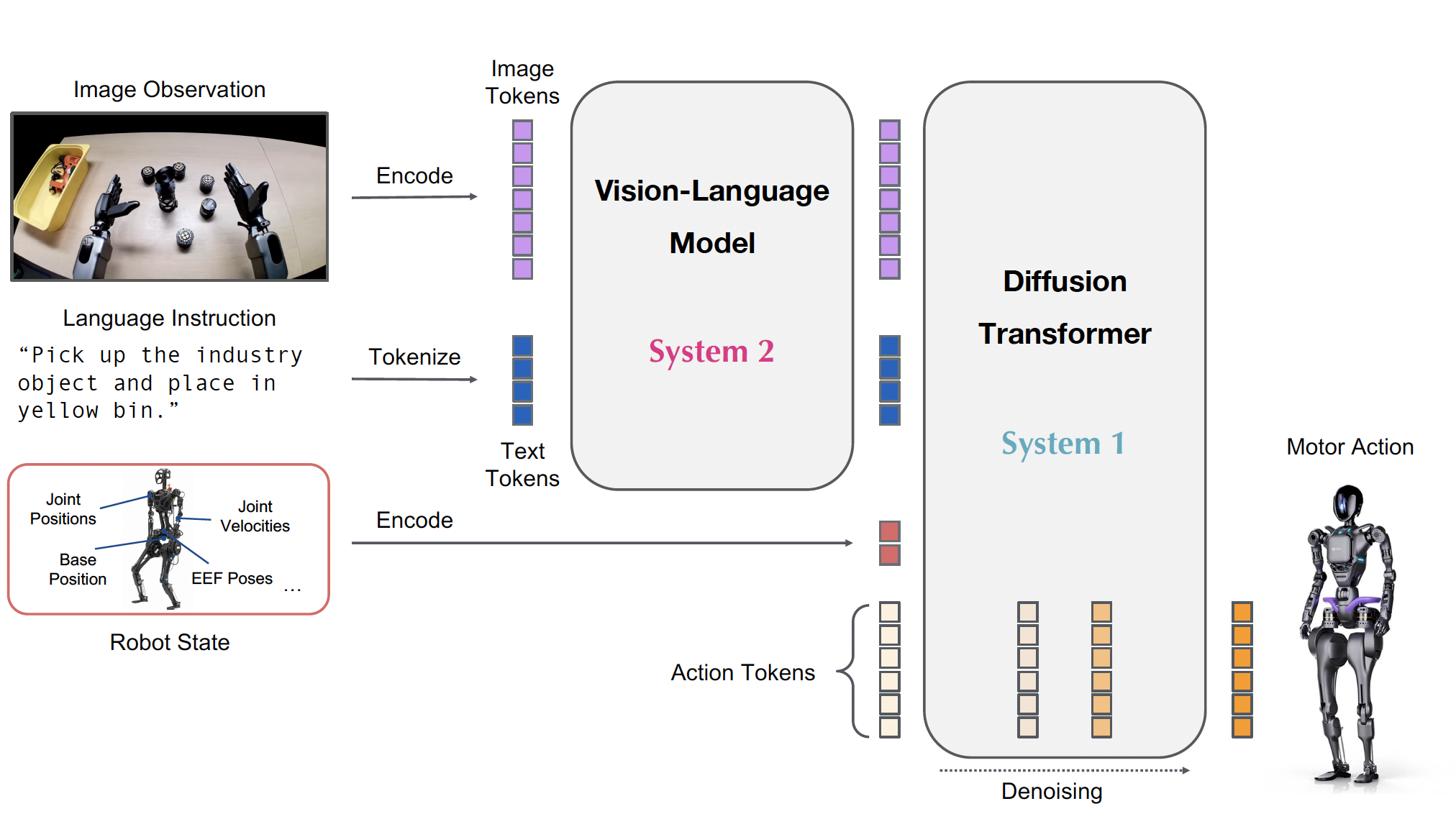

GR00T N1 is built on a Vision-Language-Action (VLA) framework with a dual-system architecture inspired by human cognition:

- System 2: Vision-Language Module - Interprets the environment through vision and language instructions, running at 10Hz on an NVIDIA L40 GPU.

- System 1: Diffusion Transformer Module - Generates fluid motor actions in real-time at 120Hz, enabling smooth, precise movements based on the high-level understanding from System 2.

Both modules are tightly coupled and jointly trained end-to-end, allowing for seamless coordination between reasoning and actuation.

The model architecture addresses a key challenge in robotics AI: learning across diverse embodiments with different sensors, actuators, and control methods. GR00T N1 uses a compositional approach with:

- Vision-Language Backbone: Built on NVIDIA's Eagle-2 VLM model, which processes visual information and language instructions

- Diffusion Transformer: Employs an action flow-matching technique to generate coherent motion sequences

- Embodiment-Specific Encoders/Decoders: Enable the model to work across different robot platforms, from tabletop arms to full humanoids

The Data Strategy Optimized at Robotics

One of GR00T N1's most innovative contributions is its approach to the "data island" problem in robotics. Unlike language or vision models, which can train on internet-scale datasets, robot data is fragmented across different hardware platforms, environments, and tasks.

NVIDIA's solution is a heterogeneous data pyramid:

- Base Layer: Large quantities of web data and human videos provide broad visual and behavioral priors

- Middle Layer: Synthetic data generated through physics simulations and neural models

- Top Layer: Real-world data collected on physical robot hardware

This pyramid approach maximizes learning from diverse data sources, with each layer serving a different purpose in the model's development. To utilize action-less data sources like human videos, NVIDIA researchers developed techniques to extract latent actions and generate pseudo-actions, effectively treating them as additional robot embodiments.

Real-World Performance and Applications

The GR00T N1 model has demonstrated impressive results across multiple evaluation benchmarks:

- Simulation Performance: Outperformed state-of-the-art imitation learning baselines on standard simulation benchmarks across various robot embodiments.

- Real-World Deployment: Successfully performed language-conditioned bimanual manipulation tasks on the Fourier GR-1 humanoid robot, showing strong data efficiency.

- Generalization Capabilities: Exhibited the ability to transfer skills between different robot platforms and adapt to novel task variations.

Applications range from pick-and-place operations to more complex bimanual tasks requiring coordination between arms and hands.

Real-World Results: Does It Actually Work?

The million-dollar question: can this thing actually do useful stuff?

Early results look promising. GR00T N1 outperformed other state-of-the-art systems in simulation benchmarks and successfully performed real-world tasks on the Fourier GR-1 humanoid robot. The system showed particular strength in language-conditioned tasks - tell it what to do in plain English, and it figures out the rest. What's especially noteworthy is the model's data efficiency. Rather than needing thousands of examples, GR00T N1 can learn new tasks from relatively few demonstrations - crucial for practical deployment.

Despite the impressive progress, several major challenges still stand between us and having useful humanoid robots in our homes and workplaces:

1. The Crushing Cost Reality

Let's talk money, because that's ultimately what determines whether technologies move from research labs to the real world.

The economics of advanced robotics are brutal. GR00T-N1-2B alone reportedly consumed approximately 50,000 H100 GPU hours just for pretraining. At current cloud computing rates, that's easily hundreds of thousands of dollars in compute costs before you've even touched real-world deployment.

Then there's the hardware. Current humanoid robots cost anywhere from $100,000 to several million dollars each. The Fourier GR-1 robot used for testing GR00T N1 isn't something you'll find on Amazon with free shipping.

This cost structure creates a paradox: we need widespread adoption to drive costs down, but the high costs prevent widespread adoption. Until we break this cycle, humanoid robots will remain primarily in the domain of well-funded research labs and corporations.

2. Latency: The Silent Dream-Killer

Latency might sound like a boring technical detail, but it's actually one of the biggest roadblocks to effective robotic systems.

Here's the problem: GR00T N1's vision-language module runs at just 10Hz—that's processing visual information and language instructions only 10 times per second. While the action generation happens at a faster 120Hz, this "thinking" bottleneck creates very real limitations.

For context, human visual processing operates at the equivalent of hundreds of hertz, and our reflexes can respond in milliseconds. This means current robotic systems still have significantly slower reaction times than humans, making them struggle with:

- Fast-moving objects or dynamic environments

- Quick adjustments to unexpected events like slippage or collisions

- Time-sensitive coordination tasks between multiple robots

- Rapid human-robot interactions requiring immediate responses

The inference time for GR00T N1 to sample just 16 actions is about 64ms on an L40 GPU. That might sound fast, but in robotics, those milliseconds can make the difference between a successful grasp and a dropped object.

3. The Ethical and Social Minefield

As if the technical challenges weren't enough, the deployment of increasingly capable robots raises serious questions:

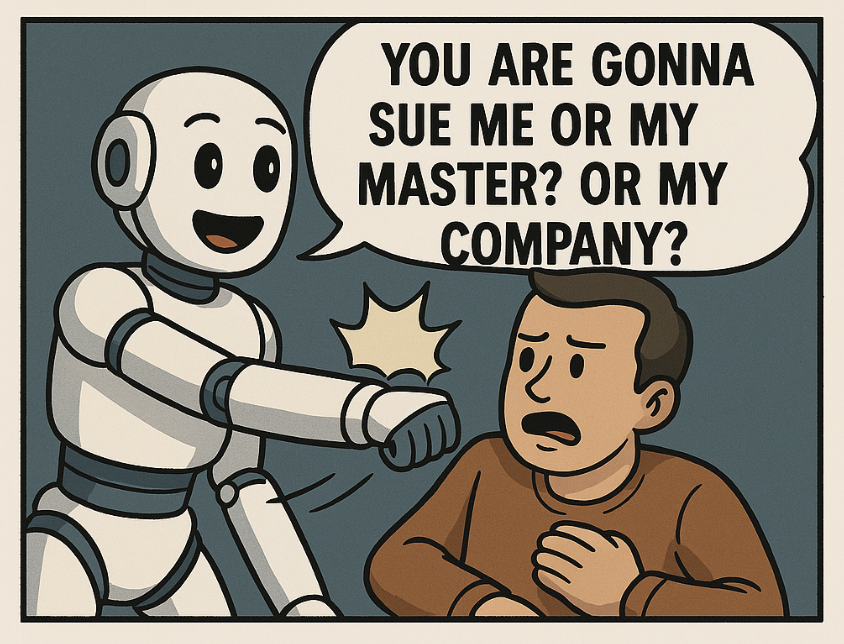

- Who's responsible? If a semi-autonomous robot causes harm, is it the manufacturer, the software developer, the owner, or some combination?

- What about bias? AI systems often inherit biases from their training data, which in robotics could affect who gets help or how tasks are prioritized.

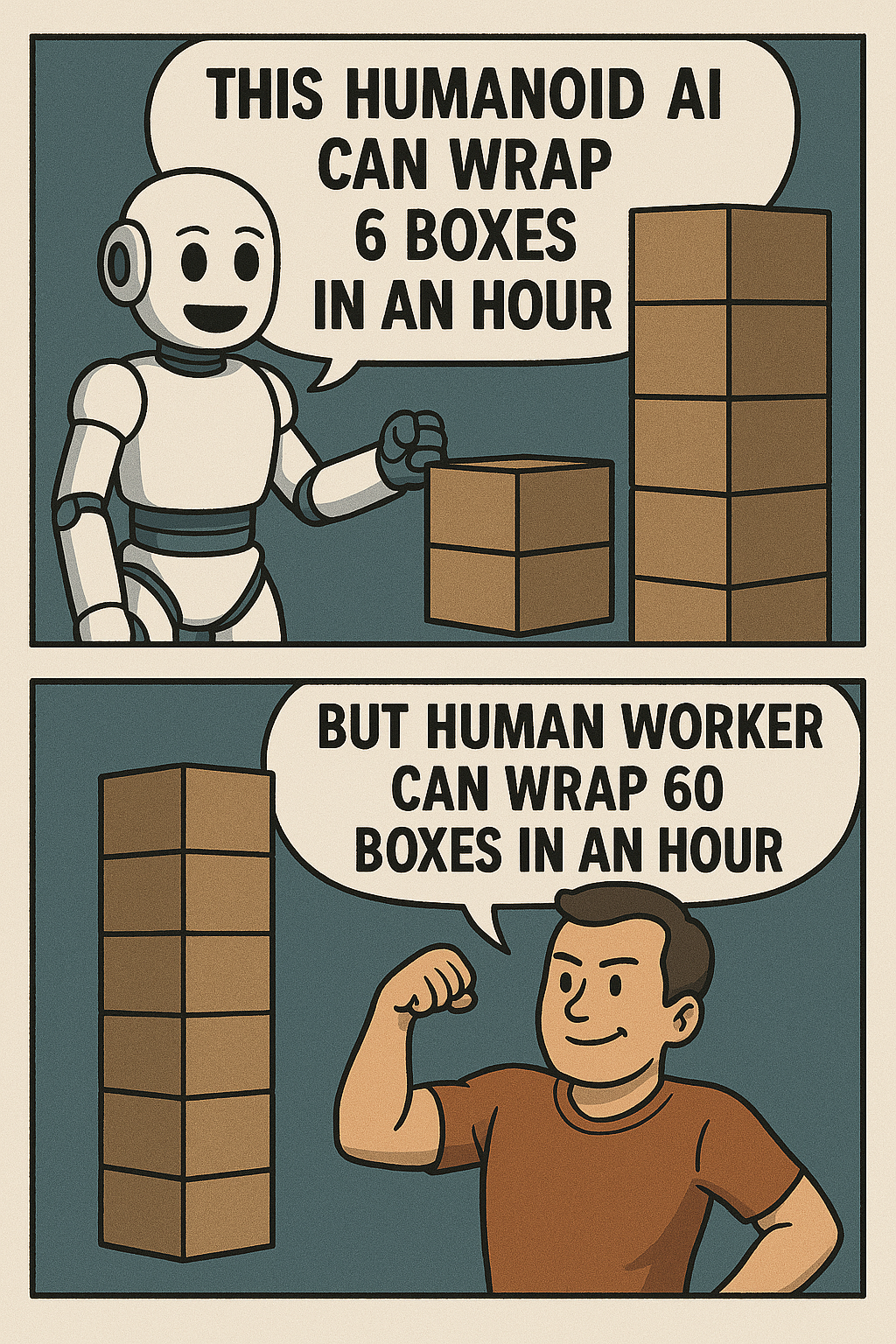

- Job disruption? Let's not pretend this isn't a concern. As robots become more capable, certain jobs will change dramatically or disappear entirely.

The legal frameworks for dealing with these issues are still playing catch-up with the technology.

The Path Forward: Reasons for Cautious Optimism

Despite these very real challenges, there are good reasons to believe that generalist humanoid robots will continue to improve:

- Better Vision-Language Models: As the backbone of systems like GR00T N1, improvements in vision-language models will directly enhance robot perception and understanding.

- More Sophisticated Simulation: Advances in physics simulation and synthetic data generation will help bridge the sim-to-real gap, making training more efficient.

- Hardware Improvements: More energy-efficient processors, better sensors, and improved actuators will expand what's physically possible.

- Collaborative Development: Open foundation models like GR00T N1 allow researchers worldwide to build upon shared progress rather than starting from scratch.

The Bottom Line

NVIDIA's GR00T N1 represents a significant step forward in robotics AI, showing that foundation models can successfully transfer to the physical domain. The system's ability to work across different robot embodiments and learn efficiently from limited data is particularly promising.

However, we should temper our expectations with realism. The challenges of data scarcity, real-time control, ethical deployment, and system integration are substantial. These aren't minor bugs to fix but fundamental obstacles that will require concerted effort from researchers, engineers, policymakers, and ethicists.

The robots of science fiction - adaptable helpers that seamlessly integrate into our lives - remain on the horizon rather than in our living rooms. But with approaches like GR00T N1, that horizon is looking closer than ever before.

What do you think? Are generalist humanoid robots going to transform our world in the next decade, or are we still underestimating the challenges? The comments section awaits your hot takes and cool insights.